Diagram labels: Vestibule, Data Hall, Cooling, Electrical, Cooling distribution, GPU data hall, Electrical infrastructure.

Initial deployment

400MW

Production-density compute, built one POD at a time.

The POD

24 data halls. One block.

25MW

45MW

65MW

Configurable density per POD.
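As a rough illustration of what the three density options imply, the sketch below divides each POD rating across its 24 data halls and counts how many whole PODs fit inside the 400MW initial deployment. It assumes power is split evenly across halls and that the POD ratings and the 400MW figure are measured on the same basis; neither is stated on this page, and the function names are hypothetical.

```python
# Figures taken from the page; the even split per hall is an assumption.
HALLS_PER_POD = 24
POD_OPTIONS_MW = (25, 45, 65)
INITIAL_DEPLOYMENT_MW = 400


def per_hall_mw(pod_mw: float, halls: int = HALLS_PER_POD) -> float:
    """Average power per data hall, assuming an even split across halls."""
    return pod_mw / halls


def whole_pods(total_mw: int, pod_mw: int) -> int:
    """Number of complete PODs that fit within a total power budget."""
    return total_mw // pod_mw


if __name__ == "__main__":
    for pod_mw in POD_OPTIONS_MW:
        hall = per_hall_mw(pod_mw)
        pods = whole_pods(INITIAL_DEPLOYMENT_MW, pod_mw)
        print(f"{pod_mw}MW POD: about {hall:.2f}MW per hall, {pods} whole PODs in 400MW")
```

Under these assumptions a 25MW POD averages just over 1MW per hall, and sixteen such PODs account for the full 400MW.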

The data hall

One hall. Ready to run.

Engineered for NVIDIA B300, GB300, and Vera Rubin.

The kit

5 modules. 1 system.

Connected by a single thread, built as one.

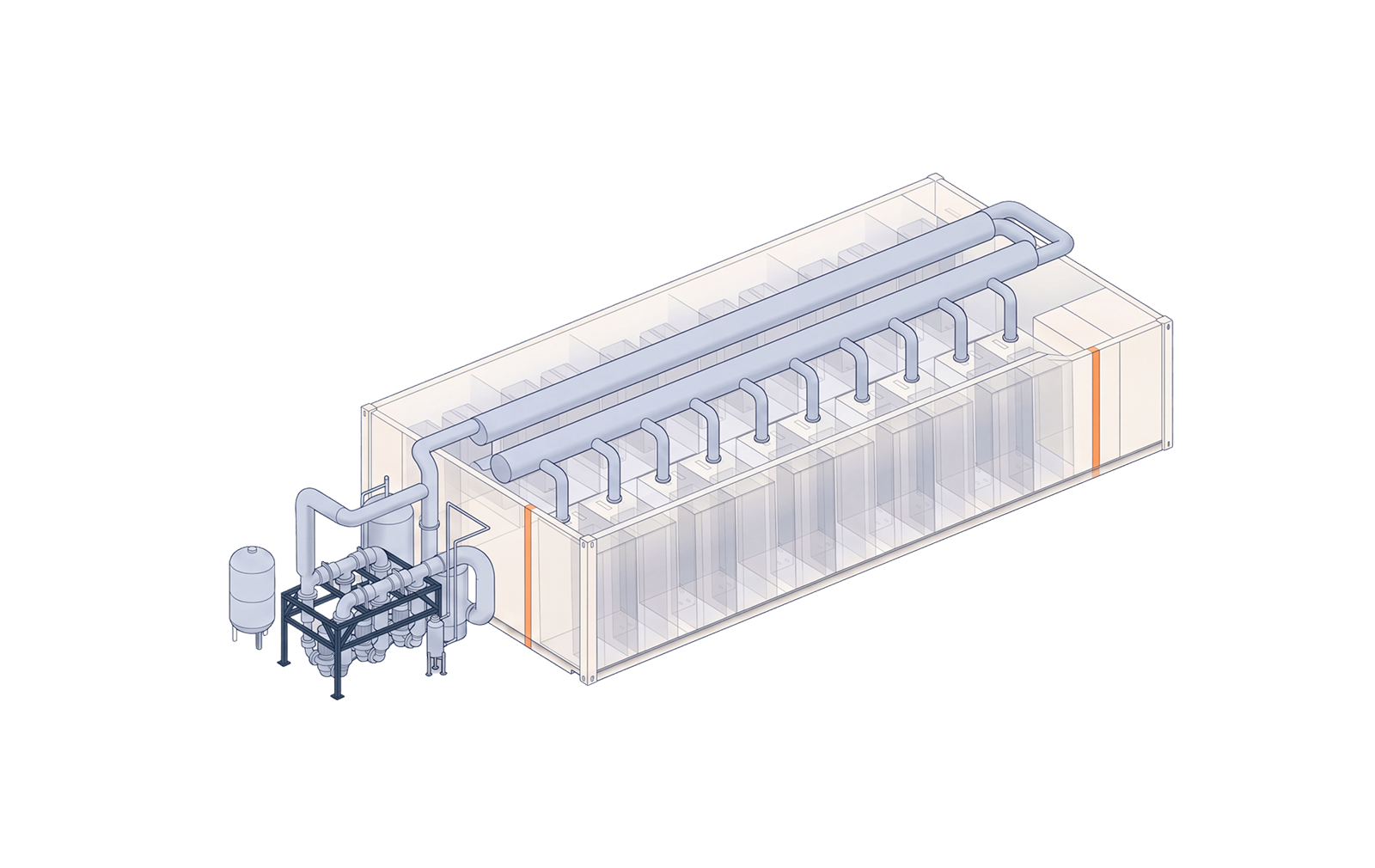

01 — Cooling distribution

Liquid cooling, factory-built.

Heat managed before it ever reaches a GPU.

02 — GPU data halls

Where the compute lives.

B300, GB300, and Vera Rubin ready. Single-tenant by design.

03 — Electrical infrastructure

Power, kept upstairs.

Service the breakers without ever entering the data hall.

In days, not months

From truck to live.

Site preparation and module fabrication happen in parallel.

Chilled water mains: Two parallel insulated pipes running the full length.

Direct-to-rack distribution: Liquid at every rack position, branched at the factory.

Redundant pump skid: Three pumps with VFDs for variable flow and full failover.

Production-density racks: Two parallel rack rows per module, cooled at the rack door.

B300 / GB300 / Vera Rubin: Sized for current and next-generation NVIDIA hardware.

Single-tenant by design: Your workload, your hardware, your container.

Stacked architecture: Distribution panels and switchgear in their own dedicated module.

Single utility feed: One controlled entry point, cleanly managed.

Compute floor stays clean: No panels, no cabinets in the rack room.